Traditional IoT has a problem: devices can connect, but they can’t think. They execute commands but don’t make decisions. They log data but never learn. ESP-Claw changes that by bringing an entire AI Agent runtime directly onto Espressif chips — turning passive executors into active decision centers.

Developed by Espressif, the company behind the ESP32, ESP-Claw is an open-source framework that lets you define device behavior through natural conversation instead of writing code. Just chat with your device via Telegram, WeChat, Feishu, or QQ, and watch it respond, learn, and act.

What is ESP-Claw?

ESP-Claw stands for “ESP + Chat as Coding”. It’s built on the OpenClaw concept and adds:

- Chat Coding: Define behavior through IM chat, with the resulting Lua scripts loaded dynamically

- Event-Driven: Any event triggers the Agent Loop — not just user messages

- Structured Memory: On-chip memory that learns and evolves

- MCP Communication: Acts as both MCP server and MCP client, so devices and services share one protocol

- Ready Out of the Box: Web-based flashing, no compilation needed

Think of it as giving your ESP32 a brain — one that can understand natural language, make decisions, remember your preferences, and control physical devices autonomously.

Traditional IoT vs ESP-Claw: A Paradigm Shift

| Dimension | Traditional IoT | ESP-Claw |
|---|---|---|
| Processing Logic | Preset static rules (IFTTT) | LLM dynamic decisions + Lua rules |
| Execution Engine | Rule engine | LLM + Lua + Router (3-tier) |
| Control Center | Cloud server | Edge node (ESP chip) |
| Device Protocol | MQTT / Matter / proprietary | MCP as unified language |
| Memory | Cloud storage | Local structured memory (JSONL + tags) |
| Interaction | App / control panel | IM chat (Telegram/WeChat/Feishu) |
| Intelligence | Preset automation | LLM + local rules (continuous evolution) |

Key Features

1. Chat as Coding — No Programming Required

Send a message like “Turn the light to blue when motion is detected” or “Play a beep when temperature exceeds 30°C” — and ESP-Claw generates the Lua code automatically. Once verified, the logic persists as a local rule for deterministic execution, even offline.
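The generate, verify, persist flow described above can be sketched in Python under clearly stated assumptions. ESP-Claw generates Lua, but here the rule is a hypothetical declarative `Rule` record. The helper `verify` runs the candidate against sample events whose outcomes are known, and `to_jsonl` serializes it. All three names are invented for illustration and are not ESP-Claw APIs.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Rule:
    """Hypothetical stand-in for a generated rule (ESP-Claw itself emits Lua)."""
    name: str
    event: str        # event type the rule listens for
    field: str        # payload field to compare
    threshold: float  # fire when payload[field] > threshold
    action: str       # action to run when the rule fires

    def matches(self, event_type: str, payload: dict) -> bool:
        value = payload.get(self.field)
        return event_type == self.event and value is not None and value > self.threshold


def verify(rule: Rule, samples: list) -> bool:
    """Dry-run the candidate against sample events whose expected outcome is known."""
    return all(rule.matches(etype, payload) == expected for etype, payload, expected in samples)


def to_jsonl(rule: Rule) -> str:
    """One JSONL line; on a device this would be appended to local flash storage."""
    return json.dumps(asdict(rule))


# Candidate generated from "Play a beep when temperature exceeds 30°C"
candidate = Rule("hot_beep", "temperature", "celsius", 30.0, "beep")
samples = [
    ("temperature", {"celsius": 31.5}, True),
    ("temperature", {"celsius": 29.0}, False),
    ("motion", {"detected": True}, False),
]

if verify(candidate, samples):
    line = to_jsonl(candidate)  # only verified rules are persisted
    print(line)
```

Gating persistence on a dry run rejects a misread instruction before it becomes an always-on rule. The article does not say how ESP-Claw actually verifies generated Lua.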

2. Event-Driven Architecture — Millisecond Response

Unlike traditional polling-based systems, ESP-Claw uses a local event bus. Sensors report events in real time, and matching Lua rules fire immediately. If no local rule matches, the Agent calls an LLM for a dynamic decision. Time-critical events handled by local rules never wait on a network round trip, so they get millisecond-level responses. Events that fall through to the LLM take as long as the cloud call.
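The dispatch order described above can be sketched as a tiny event bus: local rules are tried first, and the LLM is used only as a fallback. `EventBus`, `add_rule`, `publish`, and the `fake_llm` stub are hypothetical names for illustration. On the device the rules would be Lua scripts and the fallback a real LLM request.

```python
from typing import Callable

Handler = Callable[[dict], str]


class EventBus:
    """Minimal local event bus: try local rules first, fall back to an LLM."""

    def __init__(self, llm_fallback: Handler):
        self.rules: list = []  # (event_type, condition, action) tuples
        self.llm_fallback = llm_fallback

    def add_rule(self, event_type: str, condition: Callable[[dict], bool], action: Handler) -> None:
        self.rules.append((event_type, condition, action))

    def publish(self, event_type: str, payload: dict) -> str:
        for rule_type, condition, action in self.rules:
            if rule_type == event_type and condition(payload):
                return action(payload)  # deterministic local path, no network
        # No rule matched: slow path, ask the LLM what to do
        return self.llm_fallback({"type": event_type, **payload})


def fake_llm(event: dict) -> str:
    """Hypothetical stub standing in for a real LLM call."""
    return f"llm decides about {event['type']}"


bus = EventBus(fake_llm)
bus.add_rule("motion", lambda p: p.get("detected", False), lambda p: "light -> blue")

print(bus.publish("motion", {"detected": True}))   # light -> blue
print(bus.publish("doorbell", {"pressed": True}))  # llm decides about doorbell
```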

3. MCP Protocol — Unify Everything

ESP-Claw implements the Model Context Protocol (MCP) with dual identity:

- As MCP Server: Exposes hardware (sensors, actuators) as standard MCP Tools — callable by OpenClaw, Claude, Codex, or any MCP-compatible Agent

- As MCP Client: Calls external services — query maps, send notifications, integrate with cloud APIs

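This removes protocol silos. Any MCP-compatible agent can discover and call the device's tools, and the device can reach any external service that exposes an MCP server.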
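On the server side, MCP runs over JSON-RPC 2.0. A client discovers hardware with `tools/list` and invokes it with `tools/call`. The sketch below answers just those two methods for one hypothetical tool, `set_light_color`. It skips the `initialize` handshake and the transport layer (stdio or HTTP). It illustrates the message shapes only and is not ESP-Claw's implementation.

```python
import json


def set_light_color(color: str) -> str:
    """Hypothetical hardware tool; on a real board this would drive an LED."""
    return f"light set to {color}"


TOOLS = {
    "set_light_color": {
        "description": "Set the RGB light to a named color",
        "inputSchema": {
            "type": "object",
            "properties": {"color": {"type": "string"}},
            "required": ["color"],
        },
        "handler": set_light_color,
    }
}


def error(rid, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": rid, "error": {"code": code, "message": message}})


def handle(request_json: str) -> str:
    """Answer the MCP tool methods tools/list and tools/call."""
    req = json.loads(request_json)
    method, rid = req.get("method"), req.get("id")
    if method == "tools/list":
        result = {"tools": [
            {"name": n, "description": t["description"], "inputSchema": t["inputSchema"]}
            for n, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        params = req.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(rid, -32602, "Unknown tool")
        text = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return error(rid, -32601, "Method not found")
    return json.dumps({"jsonrpc": "2.0", "id": rid, "result": result})


call = {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
        "params": {"name": "set_light_color", "arguments": {"color": "blue"}}}
print(handle(json.dumps(call)))
```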

4. On-Chip Local Memory — It Remembers You

ESP-Claw implements a complete structured long-term memory system directly on the MCU:

- 5 Memory Types: Profile, preference, fact, event, rule

- Summary-Tag Retrieval: Lightweight mechanism — no vector database needed

- Automatic Evolution: Learns from dialogue, events, and behavior patterns

- Privacy First: Memory is stored on the device itself, not in a cloud database

Tell ESP-Claw “I prefer concise answers” — and it remembers. Ask “Do you remember my name?” — it knows.
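Summary-tag retrieval can be approximated as follows. Each memory is stored as one JSONL line holding a type, a short summary, and a set of tags, and entries are ranked by how many tags they share with the query. The field names, the overlap score, and the sample entries (including the name "Alex") are assumptions. The article does not specify ESP-Claw's record format or ranking.

```python
import json

MEMORY_TYPES = {"profile", "preference", "fact", "event", "rule"}


def make_record(mtype: str, summary: str, tags: list) -> str:
    """Serialize one memory entry as a JSONL line."""
    if mtype not in MEMORY_TYPES:
        raise ValueError(f"unknown memory type: {mtype}")
    return json.dumps({"type": mtype, "summary": summary, "tags": sorted(set(tags))})


def retrieve(jsonl: str, query_tags: set, limit: int = 3) -> list:
    """Return entries sharing at least one tag with the query, most overlap first."""
    records = [json.loads(line) for line in jsonl.splitlines() if line.strip()]
    scored = [(len(query_tags & set(r["tags"])), r) for r in records]
    hits = [pair for pair in scored if pair[0] > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep file order
    return [r for _, r in hits[:limit]]


# Hypothetical sample memory file
store = "\n".join([
    make_record("profile", "User's name is Alex", ["name", "user"]),
    make_record("preference", "User prefers concise answers", ["style", "answers"]),
    make_record("rule", "Beep when temperature exceeds 30°C", ["temperature", "beep"]),
])

for r in retrieve(store, {"name"}):
    print(r["summary"])  # User's name is Alex
```

Tag overlap needs only set operations on short strings, which is why a scheme like this can run on an MCU without a vector database.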

5. Cloud-Edge Collaboration

Tasks with tight real-time requirements run directly as local Lua rules. Complex reasoning, such as image recognition, is automatically offloaded to the cloud, and the result is sent back to the device. You get the best of both worlds: local speed plus cloud intelligence.
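The split described above can be expressed as a small router. It keeps real-time work on the chip and sends heavy reasoning to the cloud only when the cloud is reachable. The task categories in `LOCAL_TASKS` and the `DEFERRED` outcome for offline requests are hypothetical choices, not documented ESP-Claw behavior.

```python
from enum import Enum


class Route(Enum):
    LOCAL = "local"
    CLOUD = "cloud"
    DEFERRED = "deferred"


# Hypothetical: task kinds the chip handles on its own
LOCAL_TASKS = {"gpio", "led", "beep", "rule"}


def route(task_kind: str, cloud_reachable: bool) -> Route:
    """Run real-time tasks on-chip; offload heavy reasoning when the cloud is reachable."""
    if task_kind in LOCAL_TASKS:
        return Route.LOCAL
    if cloud_reachable:
        return Route.CLOUD
    return Route.DEFERRED  # offline: queue the task or report that it needs the cloud


print(route("led", cloud_reachable=False))               # Route.LOCAL
print(route("image_recognition", cloud_reachable=True))  # Route.CLOUD
```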

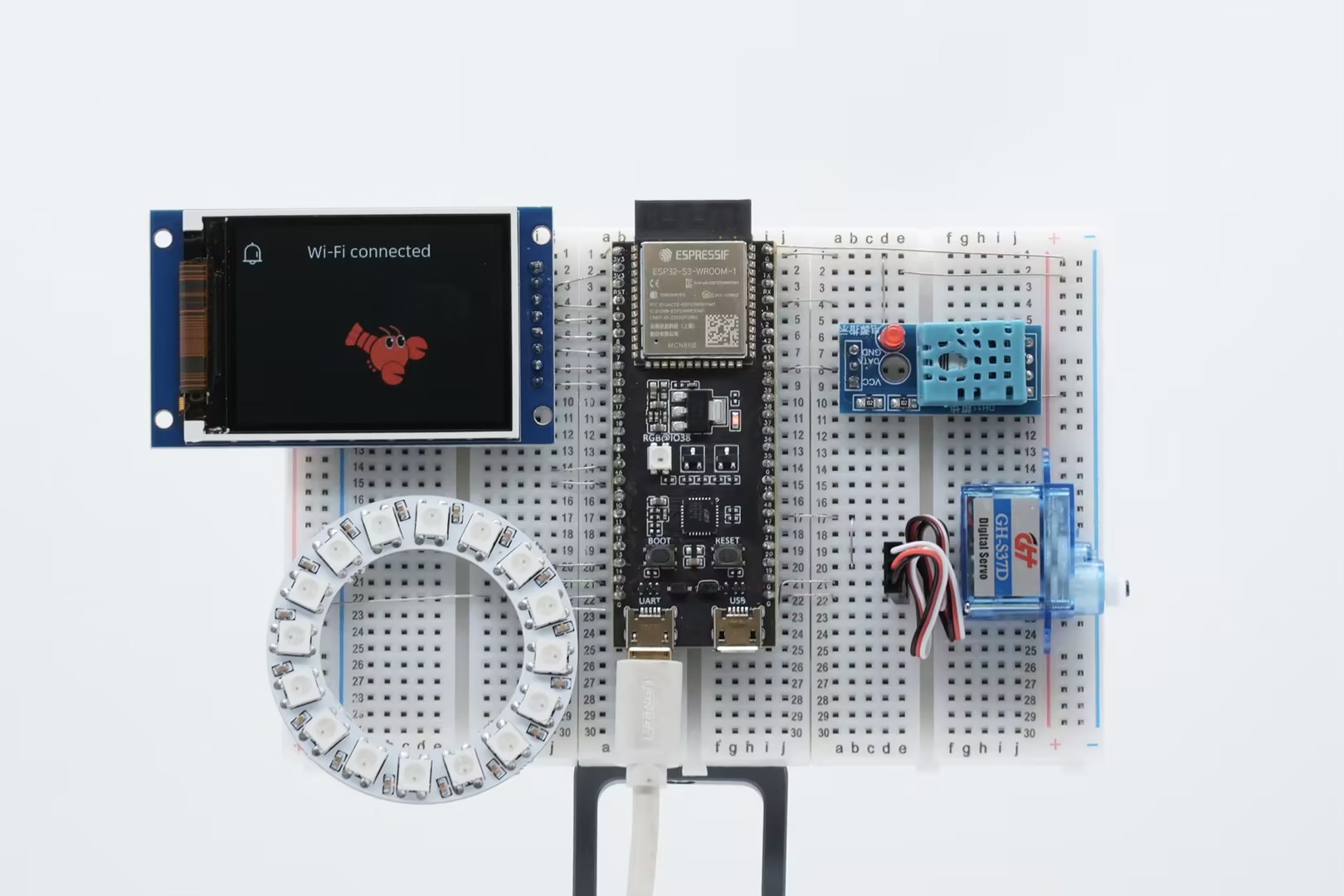

Supported Chips

Current hardware requirements:

- Minimum: 8MB Flash + 8MB PSRAM

- Supported chips: ESP32-S3 (primary), ESP32-P4 (adaptation in progress)

The ESP32-S3 is well suited thanks to its dual-core Xtensa LX7 CPU, USB OTG support, and RISC-V ultra-low-power co-processor.

Supported IM Platforms

Interact with ESP-Claw through your favorite messaging app:

- Telegram — via Bot API

- WeChat — via ClawBot

- Feishu (Lark) — via App integration

- QQ — via OpenClaw bot
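The Telegram integration goes through the Bot API. Telegram webhooks need a publicly reachable HTTPS endpoint, which a board on a home network usually lacks, so the sketch below assumes long polling with `getUpdates`. It parses the reply and advances `offset` to the highest `update_id` plus one, which marks those updates as received. It then builds a `sendMessage` request. Whether ESP-Claw polls or uses webhooks is an assumption here. The token and IDs are placeholders, and no network call is made.

```python
import json

API_URL = "https://api.telegram.org/bot{token}/{method}"


def parse_updates(response_text: str, offset: int) -> tuple:
    """Return (new_offset, [(chat_id, text), ...]) from a getUpdates reply."""
    data = json.loads(response_text)
    if not data.get("ok"):
        return offset, []
    messages = []
    for update in data["result"]:
        # The next poll asks only for updates after this one
        offset = max(offset, update["update_id"] + 1)
        msg = update.get("message")
        if msg and "text" in msg:
            messages.append((msg["chat"]["id"], msg["text"]))
    return offset, messages


def send_message_request(token: str, chat_id: int, text: str) -> tuple:
    """URL and JSON body for a sendMessage call (not sent here)."""
    return API_URL.format(token=token, method="sendMessage"), {"chat_id": chat_id, "text": text}


# Sample getUpdates reply with one text message (placeholder IDs)
reply = json.dumps({"ok": True, "result": [
    {"update_id": 1001, "message": {"message_id": 5, "chat": {"id": 42}, "text": "Turn the light on"}}
]})
offset, msgs = parse_updates(reply, 0)
url, body = send_message_request("TOKEN", msgs[0][0], "Light is on")
print(offset, msgs)
```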

Example Commands

Everyday Q&A:

- “Hi, what capabilities do you currently have?”

- “Any AI news lately?”

- “At 12% annual interest, what will $10,000 become after 3 years?”
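As a check on the interest question, here is the expected answer. The question does not say how interest accrues, so both readings are shown: annual compounding and simple interest.

```latex
\begin{aligned}
\text{compound (annual):}\quad & 10{,}000 \times (1 + 0.12)^3 = 10{,}000 \times 1.404928 = 14{,}049.28 \\
\text{simple:}\quad & 10{,}000 \times (1 + 3 \times 0.12) = 13{,}600
\end{aligned}
```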

Hardware Control:

- “Turn the light on” / “Set the light to red”

- “Draw Hello, ESP-Claw! on the display”

- “Take a photo and send it to me”

- “Play a 1s 440Hz beep”
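Behind a command like "Play a 1s 440Hz beep", the device has to synthesize a tone for the speaker. The sketch below assumes 16 kHz, signed 16-bit, mono PCM at half amplitude; the article does not state ESP-Claw's audio format. Under those assumptions, one second of audio is 16,000 samples, or 32,000 bytes. `beep_pcm` is a hypothetical helper.

```python
import math
import struct


def beep_pcm(freq_hz: float = 440.0, seconds: float = 1.0,
             sample_rate: int = 16_000, amplitude: float = 0.5) -> bytes:
    """Little-endian signed 16-bit mono PCM for a sine tone."""
    n = int(seconds * sample_rate)
    peak = int(amplitude * 32767)  # half of full scale leaves headroom
    samples = (int(peak * math.sin(2 * math.pi * freq_hz * i / sample_rate)) for i in range(n))
    return struct.pack(f"<{n}h", *samples)


pcm = beep_pcm()
print(len(pcm))  # 32000
```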

Reminders:

- “Remind me to drink water every day at 7am”

- “Remind me to sleep at 9pm every day”

- “Remind me to clean every Saturday at 8am”
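Recurring reminders like these reduce to computing the next time a daily or weekly schedule fires. Below is a minimal sketch using Python's `datetime`. `next_fire` is a hypothetical helper that works on naive local times and ignores daylight-saving transitions, which a real scheduler would need to handle.

```python
from datetime import datetime, timedelta
from typing import Optional


def next_fire(now: datetime, hour: int, minute: int = 0,
              weekday: Optional[int] = None) -> datetime:
    """Next firing of a daily (weekday=None) or weekly (0=Monday ... 6=Sunday) reminder."""
    candidate = now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    if weekday is None:
        if candidate <= now:  # today's slot already passed
            candidate += timedelta(days=1)
        return candidate
    candidate += timedelta(days=(weekday - now.weekday()) % 7)
    if candidate <= now:  # it is that weekday, but the time has passed
        candidate += timedelta(days=7)
    return candidate


now = datetime(2024, 5, 15, 10, 0)   # a Wednesday, 10:00
print(next_fire(now, 7))             # 2024-05-16 07:00:00
print(next_fire(now, 21))            # 2024-05-15 21:00:00
print(next_fire(now, 8, weekday=5))  # 2024-05-18 08:00:00
```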

Memory:

- “Remember that I prefer concise answers”

- “Do you still remember my name?”

Supported Hardware & Peripherals

ESP-Claw supports a wide range of sensors and actuators through ESP-IDF components:

- Display: LCDs, touch screens, e-paper

- Camera: OV2640, OV3660, and other ESP32-compatible cameras

- Audio: Microphones, speakers, audio playback

- LEDs: LED strips (WS2812B, SK6812), single LEDs

- Sensors: Temperature, humidity, motion (PIR), light, gas, etc.

- Actuators: Motors, relays, servos

Software Architecture

ESP-Claw follows a modular architecture:

- Application Layer: basic_demo and custom applications

- Runtime Core: LLM + Lua + Router (3-tier event handling)

- Capability Plugins: lua_module_display, lua_module_camera, etc.

- Hardware Extensions: Driver integrations via ESP-IDF
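The capability-plugin layer can be pictured as a registry that the runtime consults before calling hardware. Each plugin registers the functions it provides, and a board without, say, a camera simply never registers `lua_module_camera`. `CapabilityRegistry` and its methods are hypothetical. The real plugins are Lua modules backed by ESP-IDF drivers, not Python callables.

```python
from typing import Callable


class CapabilityRegistry:
    """Hypothetical registry of capability plugins and the functions they expose."""

    def __init__(self) -> None:
        self._modules: dict = {}  # module name -> {function name: callable}

    def register(self, module: str, functions: dict) -> None:
        if module in self._modules:
            raise ValueError(f"{module} already registered")
        self._modules[module] = dict(functions)

    def call(self, module: str, function: str, *args: object) -> object:
        try:
            fn: Callable = self._modules[module][function]
        except KeyError:
            raise LookupError(f"{module}.{function} is not available on this board") from None
        return fn(*args)

    def available(self) -> list:
        return sorted(f"{m}.{f}" for m, fns in self._modules.items() for f in fns)


registry = CapabilityRegistry()
registry.register("lua_module_display", {"draw_text": lambda text: f"drew {text!r}"})
print(registry.available())  # ['lua_module_display.draw_text']
print(registry.call("lua_module_display", "draw_text", "Hello, ESP-Claw!"))
```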

How to Get Started

Getting ESP-Claw running takes minutes:

- Prepare Hardware: ESP32-S3 dev board (8MB+ Flash, 8MB+ PSRAM)

- Connect: USB cable to your computer

- Web Flash: Open esp-claw.com/en/flash in Chrome/Edge

- Configure: Wi-Fi, LLM API key (GPT/Qwen/Claude), IM platform

- Flash: One-click firmware upload

- Chat: Start talking to your device!

No compilation. No tooling setup. Just a browser and a board.

Use Cases

What can you build with ESP-Claw?

Smart Home Assistant

A voice-controlled home automation hub that learns your habits and controls lights, fans, and appliances through conversation.

Environmental Monitor

Monitor temperature, humidity, CO2, and air quality. Get alerts when thresholds are exceeded — all locally.

Interactive Display

Build a smart dashboard that shows weather, calendar, and notifications — controllable via chat.

AI Pet Camera

Take photos, analyze them with AI, and send results to your phone. Processing stays on-device where possible; heavier analysis such as image recognition is offloaded to the cloud.

Custom Games

Generate pixel-art mini games via chat. Let your ESP32 play games with you!

Learning Robot

Build a balancing robot that iteratively improves its algorithm through conversation and self-experimentation.

Resources

- Website: esp-claw.com

- GitHub: github.com/espressif/esp-claw

- Documentation: esp-claw.com/en/tutorial/

- Web Flasher: esp-claw.com/en/flash

Conclusion

ESP-Claw represents a fundamental shift in how we think about IoT devices. Instead of dumb sensors that wait for cloud commands, it creates edge AI agents that can:

- Understand natural language

- Make decisions locally

- Learn from interactions

- Control physical devices

- Run offline when needed

At its core, ESP-Claw asks: What if your devices could think?

For makers, developers, and smart home enthusiasts, ESP-Claw offers a compelling vision: IoT that doesn’t just connect — it understands.

Ready to try? Head to esp-claw.com/en/flash and flash your first ESP32-S3 in minutes.